Greetings from Roll the Bones! Here we muse about complexity, learning, epistemology, accomplishing goals in complex environments, and whatever else I might be in the mood to discuss.

One thing I want to drive home is that life is not a game. The only simple, widely-understandable rules it follows are given by God Himself. In all other aspects, things are often more complex than they appear.

Personal Note

I’ve written a book! It’s an introductory text on coping with complexity and uncertainty. If you’re curious, please take a look. If you like it, please share. If you buy it, please read. If it helps you, please pay it forward and help someone else.

Gumroad: https://gum.co/notesoncomplexity

This Week’s Links

In this issue, I’m sharing some of my readings as I think about how AI/automation/whatever you want to call it can behave more as a “team player” in complex environments, more properly cooperating with people. In studying this, software users must ask a question: “what is this software’s idea of who I am?”

I’ve been thinking about self-driving cars lately. Can you tell? Each paper can be related to them in one way or another.

There are no affiliate links here, just things I’ve been reading. None of the authors have any idea their work is about to be featured.

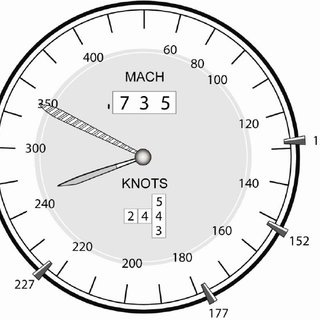

How A Cockpit Remembers Its Speeds

Edwin Hutchins takes a distributed thinking system, rather than a person, as his unit of “cognitive analysis” in this groundbreaking paper from 1995. He is careful not to drown the reader in technical details from his aviation examples, and he walks a clear, understandable path between the study of “people thinking” and “system thinking” to better understand how they operate as a whole.

My thoughts:

Keeping track of speed is necessary for survival in Hutchins’ examples - too low a speed in many wing conditions will make you fall out of the sky, while a high speed close to the ground is just asking for trouble. This is a convex or fragile measure (only good when not too hot, not too cold) whose “just right” changes based on the situation. You can ask that of any threshold for anything you measure. Is a C “good enough” in school? It depends.

Studying a system, rather than a person, means you can pick apart and observe some parts of the system rather than just guessing what’s going on inside the brain. In this cockpit example, the dashboards and tools and books and procedures are all there to be seen. Some of the thinking has been “externalized”.

Some of the pieces of equipment he describes remember things so that the pilots can focus on other work. This is not helping the pilot’s memory - it is replacing it for this task. The system (cockpit) remembers important facts about safe/desirable/etc. speeds, but that burden is distributed across more than the humans and has redundancy/error correction built in.

New Wine in New Bottles

This is the paper in which David Woods and Erik Hollnagel introduced “Cognitive Systems Engineering”, the predecessor to what’s now called “Resilience Engineering”. It leads with some strong thoughts on how intelligent, non-human components of a system should be aware of their role in a broader system. I wish that AI was good at that today. Maybe we can change that.

My thoughts:

As previous issues have discussed, systems are not just the sum of their parts. This is also the case for thinking systems. They are not just the thinking humans, but other parts as well that contribute to the “cognitive work”. This becomes more obvious every day thanks to AI journalism.

Models are useless but modeling is indispensable. Think about the system and its behavior.

“It is obvious that the system as a whole will function differently when the distribution of tasks between the machine and the operator is 95:5% as opposed to, say, 80:20% and the latter alternative is not necessarily less efficient than the former”. Boredom, stress, and being an active part of the control loop are all significant. Current models of self-driving seem to want to remove people from the control loop as much as possible - that’s the advertised feature!

“The need for the operator to intervene directly in the process is much reduced, but the requirements to evaluate information and supervise complex systems is higher.” Every single thing that’s automated does this. What new burdens does it place on people? It’s awfully rude for software to be so inconsiderate of others.

“I Want To Treat The Patient, Not The Alarm!”

Karen Raymer’s Master’s Thesis is from the medical field but underscores a similar point about the mismatch between an alarm system’s image of what its human operators can and will do and the reality of what it’s like for people to use these systems.

My thoughts:

When we say an inanimate object has an “image” of its operator, we mean that the designers made certain assumptions about the operator and those are reflected in the design of the device. This is expanded upon in the “New Wine…” paper above.

A zero false-negative rate can lead to an intolerable false-positive rate. In my case, I’ve been woken up in the middle of the night or interrupted at work more times than I can count for alerts that turned out to be…nothing. When an alarm becomes more noise than signal, you get Aesop’s Fable about The Boy Who Cried Wolf, and consequences ensue.
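To make that trade-off concrete, here is a minimal sketch in Python. Everything in it is made up for illustration: the `readings` list, the idea of a single numeric threshold, and the `alarm_counts` helper are my own assumptions, not anything taken from the thesis. It only shows how lowering a threshold until nothing real is missed can start paging you for readings that are fine.

```python
# Hypothetical monitor readings: (value, is_real_problem).
# All numbers are invented purely to illustrate the trade-off.
readings = [
    (52, False), (55, False), (58, False), (61, False), (63, False),
    (66, False), (70, True), (72, False), (75, False), (88, True), (93, True),
]


def alarm_counts(threshold):
    """Return (missed_problems, false_alarms) if we alarm when value >= threshold."""
    missed = sum(1 for value, real in readings if real and value < threshold)
    false_alarms = sum(1 for value, real in readings if not real and value >= threshold)
    return missed, false_alarms


# Lower the threshold step by step and watch the two kinds of error trade places.
for threshold in (90, 80, 70):
    missed, false_alarms = alarm_counts(threshold)
    print(f"threshold={threshold}: missed={missed}, false alarms={false_alarms}")
```

With this toy data, the only threshold that misses nothing is also the one that starts raising false alarms. Real alarm systems are far messier than one number and one cutoff, but the shape of the problem is the same.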

We measure things indirectly because we often cannot measure them directly (internal temperature of meat as a proxy for “safe to eat”, for example). Trouble ensues when we forget this, and when our behavior drifts to over-optimize for the proxy we can measure instead of the real reason we care.

“Clumsy technology” is like a clumsy nurse in the operating room. It doesn’t matter how good technology is at its best if it turns out to be downright dangerous at other times. Let us teach our technology to be less clumsy, and let us start by teaching it whose shoulder it can lean on. Let us be benevolent creators.

Thank you for reading and engaging.

I appreciate you taking the time to read this newsletter. It’s free, but if you want to help support me you can always make a one-time donation.

Engage with me on Twitter at @10101Lund about these or any other topics. If you find an error in this newsletter, please let me know and I’ll correct it. Archives of this newsletter are here.

Tell a friend. See you next issue!